Enterprise Model Lifecycle Management

Operate AI models the way Kubernetes operates containers, with a control plane built for production inference.

Register, version, test, release, monitor, and optimize models through a single control plane. ModelMesh gives platform teams deterministic governance from sandbox to global traffic.

Trusted design patterns inspired by leading AI and cloud platforms

Why Teams Switch

The operational gap in AI model delivery

Most organizations still ship models with disconnected scripts, no release policy, and no financial observability.

Version Drift Across Teams

Different squads deploy untracked variants, creating compliance and debugging risk in regulated environments.

Unsafe Rollouts

Without native canary and rollback policies, minor regressions spread across production before alerts trigger.

No Unified Telemetry

Latency, hallucination rates, and spend are measured in separate tools with no lifecycle correlation.

Unpredictable Cost Curves

Compute, token, and GPU costs rise faster than traffic due to missing model-level routing intelligence.

Lifecycle Engine

From model registration to autonomous rollback

Policy-driven stages enforce quality and cost constraints before each traffic transition.

- 01 Registry + Provenance: Every model artifact is signed, tagged, and attached to dataset lineage metadata.

- 02 Validation Gates: Offline accuracy, online replay, and bias checks run before promotion to staging.

- 03 Canary + Gradual Release: Traffic moves across lanes with live thresholds for latency, quality, and business impact.

- 04 Continuous Monitoring: Model drift, prompt safety, and reliability metrics stream to a unified control dashboard.

- 05 Auto-Rollback or Promote: Policy engine reverts failing versions or increases exposure for high-performing revisions.

Progressive Delivery Topology

Policies enforce p95 latency < 180 ms, quality delta < 0.8%, and cost delta < 12% before every promotion.
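A promotion gate of this kind can be sketched in a few lines. The example below is illustrative only and is not ModelMesh's API: the names `CanaryMetrics`, `gate_violations`, and `decide` are hypothetical, and it assumes "quality delta" and "cost delta" mean regression and increase relative to the current baseline, expressed in percent.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class CanaryMetrics:
    """Hypothetical metrics record for a candidate model version."""
    p95_latency_ms: float      # observed p95 latency of the candidate
    quality_delta_pct: float   # assumed: quality regression vs. baseline, in percent
    cost_delta_pct: float      # assumed: cost increase vs. baseline, in percent


# Thresholds copied from the policy statement above.
MAX_P95_LATENCY_MS = 180.0
MAX_QUALITY_DELTA_PCT = 0.8
MAX_COST_DELTA_PCT = 12.0


def gate_violations(m: CanaryMetrics) -> List[str]:
    """Return the names of violated thresholds; an empty list means the gate passes."""
    violations = []
    # Each policy is a strict "<" bound, so a value equal to the limit fails.
    if m.p95_latency_ms >= MAX_P95_LATENCY_MS:
        violations.append("p95_latency")
    if m.quality_delta_pct >= MAX_QUALITY_DELTA_PCT:
        violations.append("quality_delta")
    if m.cost_delta_pct >= MAX_COST_DELTA_PCT:
        violations.append("cost_delta")
    return violations


def decide(m: CanaryMetrics) -> str:
    """Map the gate result to a lifecycle action."""
    return "rollback" if gate_violations(m) else "promote"
```

Returning the list of violated thresholds rather than a bare boolean keeps the decision auditable: the same record that blocks a promotion can be written to the release log.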

Core Capabilities

Everything needed for enterprise AI runtime governance

Model Registry API

Immutable model snapshots, metadata versioning, and audit trails built into every commit.
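To make "immutable snapshot" concrete, here is a minimal in-memory sketch. It does not show the actual Model Registry API; `ModelSnapshot`, `Registry`, and `snapshot_id` are hypothetical names, and deriving the ID from a hash of the metadata is an assumption made for illustration.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class ModelSnapshot:
    """Hypothetical immutable record describing one model version."""
    name: str
    version: str
    artifact_sha256: str           # digest of the model artifact itself
    dataset_ids: Tuple[str, ...]   # lineage: datasets used for training


def snapshot_id(s: ModelSnapshot) -> str:
    """Derive a stable ID from the snapshot's metadata (content addressing)."""
    payload = json.dumps(asdict(s), sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


class Registry:
    """Toy registry: snapshots are never overwritten, every call is logged."""

    def __init__(self) -> None:
        self._snapshots: Dict[str, ModelSnapshot] = {}
        self.audit_log: List[Tuple[str, str, str]] = []

    def register(self, s: ModelSnapshot, actor: str) -> str:
        sid = snapshot_id(s)
        # Identical metadata yields the same ID, so re-registering is a no-op.
        self._snapshots.setdefault(sid, s)
        self.audit_log.append((actor, "register", sid))
        return sid

    def get(self, sid: str) -> ModelSnapshot:
        return self._snapshots[sid]
```

Because the ID is a function of the content, any change to the metadata produces a new snapshot instead of mutating an existing one, which is what makes the audit trail trustworthy.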

Release Policy as Code

Define routing, rollback, and compliance checks in declarative lifecycle specs.
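A declarative lifecycle spec might look like the sketch below. The field names (`traffic_steps_pct`, `rollback_to`, `required_checks`) and the model and check names are hypothetical, chosen only to illustrate the idea; this is not ModelMesh's actual schema. The spec is plain data, and a small parser rejects invalid rollouts before anything is deployed.

```python
from dataclasses import dataclass
from typing import Any, Dict, Tuple


@dataclass(frozen=True)
class ReleaseSpec:
    """Hypothetical validated form of a lifecycle spec."""
    model: str
    traffic_steps_pct: Tuple[int, ...]   # canary exposure at each stage
    rollback_to: str                     # version to restore on failure
    required_checks: Tuple[str, ...]     # compliance checks that must pass


def parse_spec(raw: Dict[str, Any]) -> ReleaseSpec:
    """Validate a raw spec (e.g. loaded from a config file) and freeze it."""
    steps = tuple(int(s) for s in raw["traffic_steps_pct"])
    if not steps or steps[-1] != 100:
        raise ValueError("last traffic step must be 100")
    if any(s <= 0 for s in steps):
        raise ValueError("traffic steps must be positive")
    if any(b <= a for a, b in zip(steps, steps[1:])):
        raise ValueError("traffic steps must strictly increase")
    return ReleaseSpec(
        model=raw["model"],
        traffic_steps_pct=steps,
        rollback_to=raw["rollback_to"],
        required_checks=tuple(raw.get("required_checks", ())),
    )


# Illustrative spec; all values are made up for the example.
EXAMPLE_SPEC = {
    "model": "recsys-ranker:2.3.0",
    "traffic_steps_pct": [1, 10, 50, 100],
    "rollback_to": "recsys-ranker:2.2.4",
    "required_checks": ["bias_report", "pii_scan"],
}
```

Keeping the spec as data means it can be reviewed, versioned, and diffed like any other code change.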

Canary and Blue-Green

Run controlled experiments with user-segment targeting and instant traffic reversibility.
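Segment-targeted canary routing is commonly implemented with deterministic hashing, so that a given user always lands in the same lane. The sketch below shows that general technique, not ModelMesh's router; `bucket`, `route`, and the `salt` value are hypothetical.

```python
import hashlib
from typing import FrozenSet


def bucket(user_id: str, salt: str) -> int:
    """Map a user to a stable bucket in the range [0, 100)."""
    digest = hashlib.sha256(f"{salt}:{user_id}".encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % 100


def route(
    user_id: str,
    segment: str,
    canary_pct: int,
    canary_segments: FrozenSet[str],
    salt: str = "release-salt",  # hypothetical per-release salt
) -> str:
    """Return "canary" or "stable" for one request."""
    # Users outside the targeted segments never see the canary.
    if segment not in canary_segments:
        return "stable"
    # Users whose bucket falls below the exposure level go to the canary.
    return "canary" if bucket(user_id, salt) < canary_pct else "stable"
```

Setting `canary_pct` to 0 sends every request back to the stable lane on the next call, with no per-user state to clean up, which is one simple way to get instant reversibility.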

Unified Performance Telemetry

Track latency, throughput, quality, and drift for each model version in real time.

Cost Intelligence Layer

Analyze token, GPU, and infra spend per endpoint with automated optimization playbooks.

Multi-Cluster Federation

Coordinate deployments across clouds and regions from one enterprise control plane.

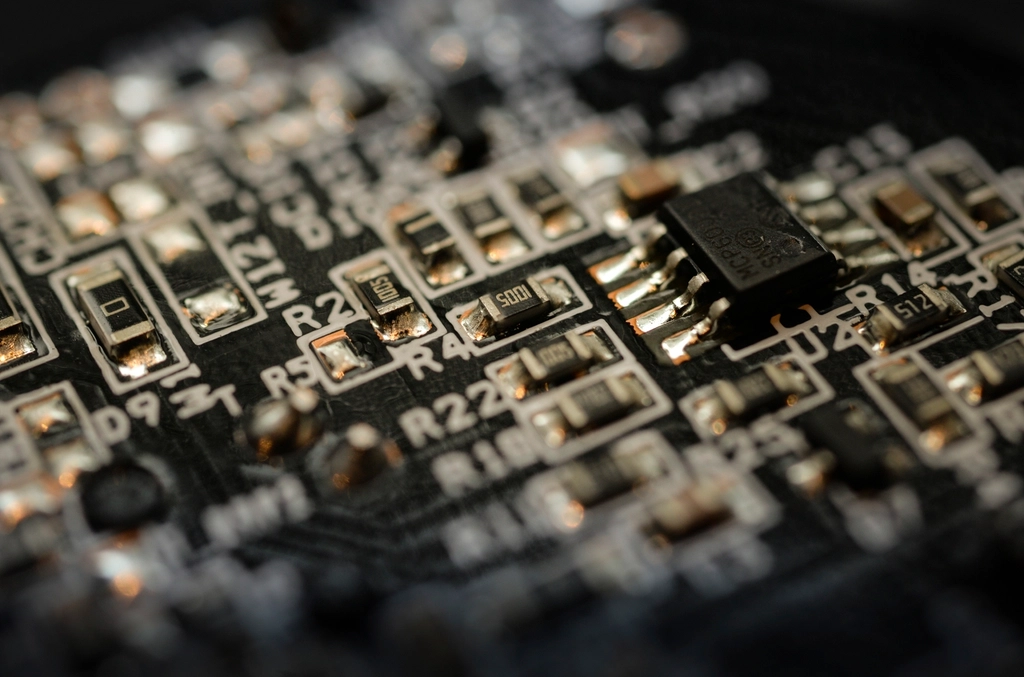

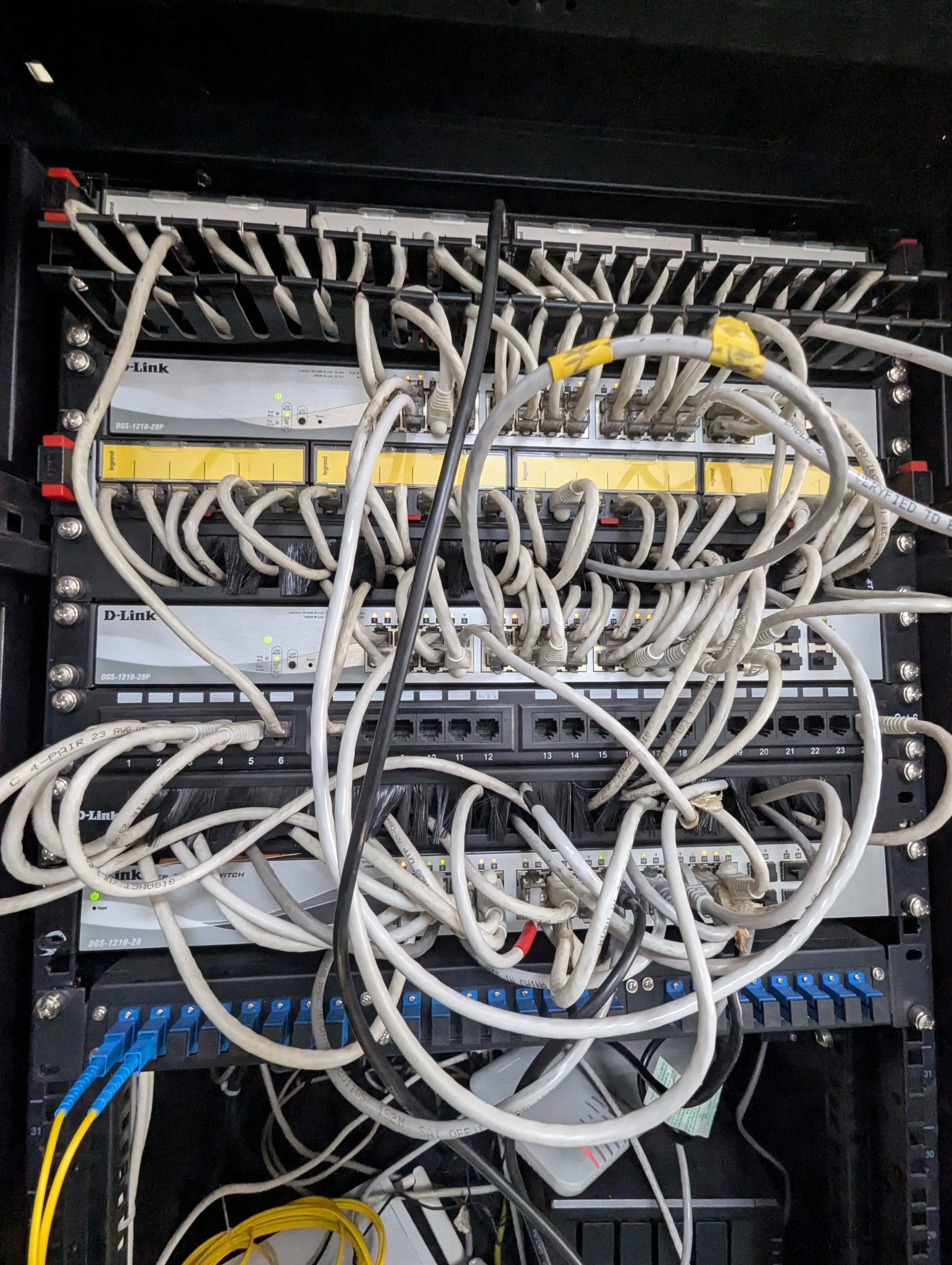

Platform Visuals

Operational surfaces for model infrastructure teams

Purposeful visual language for high-stakes AI operations, tuned for enterprise communication and technical credibility.

Multi-cluster Runtime

Cross-region inference orchestration with immutable version lanes and deterministic rollback boundaries.

Performance Optimization

Fine-grained GPU, memory, and route-level tuning across model variants.

Traffic Reliability

Canary and failover channels monitored with real-time policy thresholds.

Use Cases

Single platform, multiple AI delivery patterns

Model-level control for fraud and risk scoring

Deploy incremental versions with regulator-ready audit logs and strict rollback guarantees when false positives spike.

- Segment canary by transaction type and region

- Version lineage linked to training datasets

- Automated rollback from business KPI thresholds

Clinical safety constraints in every release lane

Gate model updates with quality benchmarks and reliability checks before touching patient-facing workflows.

- Policy-driven promotion across hospital clusters

- Explainability metrics tracked by model version

- Drift alerts mapped to triage outcomes

Optimize recommendation quality and spend together

Run A/B experiments with live cost envelopes to improve conversion while controlling inference costs.

- Traffic shaping by user segment and channel

- Latency budgets per personalization endpoint

- Automatic fallback to efficient model variants

Central command for platform engineering teams

Unify lifecycle workflows across business units without forcing every team to rebuild release infrastructure.

- Cross-cluster deployment templates

- Centralized SLA and SLO monitoring

- Cost and quality scorecards for each team

Performance

Operational benchmark

ModelMesh aligns quality, latency, and release confidence in one runtime governance loop.

| Metric | ModelMesh | Typical Scripted Pipeline |
|---|---|---|
| Rollback Time | < 15 sec | 5 to 30 min |
| Version Traceability | Full lineage | Partial / manual |
| Canary Automation | Policy-native | Custom scripts |
| Cost Visibility | Per model version | Per cluster only |

Cost Intelligence

Spend optimization simulator

Estimate savings when routing non-critical traffic to efficient model versions.
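The arithmetic behind such an estimate is simple. The function below is a rough sketch under stated assumptions: a flat cost per 1,000 requests for each model tier and a known share of non-critical traffic. It is not the simulator's actual model, and all names and input values are hypothetical.

```python
def estimated_monthly_savings(
    monthly_requests: float,
    cost_per_1k_premium: float,     # assumed flat cost per 1,000 requests, premium model
    cost_per_1k_efficient: float,   # assumed flat cost per 1,000 requests, efficient model
    non_critical_share: float,      # fraction of traffic classed as non-critical (0..1)
    routed_share: float = 1.0,      # fraction of non-critical traffic actually rerouted
) -> float:
    """Estimate monthly savings from rerouting non-critical traffic."""
    if not 0.0 <= non_critical_share <= 1.0 or not 0.0 <= routed_share <= 1.0:
        raise ValueError("shares must be between 0 and 1")
    rerouted_requests = monthly_requests * non_critical_share * routed_share
    saving_per_1k = cost_per_1k_premium - cost_per_1k_efficient
    return rerouted_requests / 1000.0 * saving_per_1k


# Made-up inputs: 10M requests/month, 40% non-critical, $2.00 vs $0.50 per 1k.
savings = estimated_monthly_savings(10_000_000, 2.00, 0.50, 0.40)
```

With these illustrative inputs, 4 million requests are rerouted at a saving of $1.50 per thousand, for roughly $6,000 per month. Real savings depend on pricing tiers, utilization, and any quality trade-off of the efficient variant.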

Talk to the Platform Team

Plan your enterprise rollout

Share your current model delivery stack. We will map a migration plan for registry, release safety, and cost governance.

- Architecture review for model registry and release policy design

- Deployment plan for canary strategy and rollback automation

- Cost-control model for traffic shaping and inference tiering

Typical onboarding workshop: 90 minutes with platform, ML, and security stakeholders.